Deepfakes have become a huge issue lately, especially when it comes to revenge porn. Imagine seeing a video of yourself doing something you never did—it’s terrifying. Now, victims are taking a stand against Meta, claiming the company didn’t do enough to stop these fake videos from spreading. They’re suing for a whopping $10 billion. This case isn’t just about money; it’s about holding tech giants accountable in a world where AI can create pretty much anything. As this unfolds, it raises big questions about privacy, security, and the laws meant to protect us.

Key Takeaways

- Deepfake technology is rapidly advancing, making it easier to create realistic fake videos.

- Victims of deepfake revenge porn are suing Meta for $10 billion, accusing the company of not doing enough to prevent the spread of harmful content.

- Cyberstalking laws are being tested and challenged by the rise of deepfake technology, highlighting the need for updated legislation.

- Meta faces serious allegations and legal challenges, which could impact its reputation and operations.

- The lawsuit against Meta could set a precedent for how tech companies are held accountable for AI-generated content.

Understanding Deepfake Technology and Its Implications

The Rise of AI-Generated Content

Lately, we’ve seen a surge in AI-generated content, and it’s both mind-blowing and a bit worrying. Deepfakes are at the heart of this tech wave, creating hyper-realistic but fake images, videos, or audio. What’s wild is how easy it is to make these now. With just a computer and some software, anyone can whip up content that looks almost real. This tech is not just a toy; it’s reshaping how we think about truth and authenticity.

How Deepfakes Are Created

Creating a deepfake involves a few steps, but the gist is pretty straightforward:

- Gather a bunch of images or footage of the person you want to mimic.

- Use AI algorithms to analyze and learn from these images.

- Generate a new, fake video or audio clip where the person appears to say or do something they never did.

The magic lies in the AI’s ability to learn and recreate facial expressions and voice patterns, making the fake content eerily convincing.

Impacts on Privacy and Security

Deepfakes bring up serious privacy and security concerns. Imagine someone making a fake video of you doing something embarrassing or illegal. It’s a nightmare scenario. These fakes can be used for blackmail, misinformation, or just plain old harassment. The tech world is buzzing about how to deal with this, but it’s a tough nut to crack.

Deepfakes aren’t just a tech issue; they’re a societal challenge. They force us to rethink our relationship with digital content and the trust we place in what we see and hear.

We need to stay sharp and informed about how deepfakes use AI so we can tackle these challenges head-on. It’s not just about tech; it’s about protecting ourselves and our communities.

The Legal Landscape Surrounding Deepfakes

Current Cyberstalking Laws

Alright, let’s dive into the world of cyberstalking laws. These laws are like our first line of defense against the wild west of the internet. They aim to protect folks from being harassed or stalked online. But when it comes to deepfakes, things get a bit tricky. You see, most of these laws were crafted before the rise of AI-generated content, so they don’t always cover the unique challenges posed by deepfakes. This gap leaves victims vulnerable and often without legal recourse. It’s like trying to fit a square peg in a round hole.

Recent Legislation in California

California’s been making waves with its recent legislation aimed at tackling deepfakes. They’ve passed laws that specifically target deepfakes in both political and pornographic contexts. One law gives people the right to sue if they’re placed in porn without their consent. Another bans political deepfakes during election seasons. It’s a step forward, but there’s still a long way to go. These laws are a reaction to the growing concern over how deepfakes are being used to harm individuals and manipulate public opinion.

Challenges in Enforcing Laws

Now, enforcing these laws? That’s a whole different ball game. The digital landscape is vast and ever-changing, making it tough for law enforcement to keep up. Plus, the anonymity of the internet allows perpetrators to hide behind screens, making it difficult to track them down. There’s also the challenge of jurisdiction. If a deepfake is created in one country and affects someone in another, whose laws apply? It’s a legal puzzle that we’re still trying to solve.

The intersection of technology and law is a complex dance, requiring constant adaptation to new challenges. As deepfake technology evolves, so too must our legal frameworks to ensure protection and justice for all.

Victims of Deepfake Revenge Porn Speak Out

Personal Stories of Impact

When it comes to deepfake revenge porn, the stories are both heartbreaking and infuriating. Imagine waking up one day to find your face plastered on explicit content you never consented to. It’s a nightmare that many victims live through. These deepfakes are not just images or videos; they are invasions of privacy that can destroy lives. Victims often feel violated and powerless, as these digital manipulations can spread like wildfire, making it nearly impossible to regain control over their own image.

Psychological and Emotional Consequences

The emotional toll of being a victim of deepfake revenge porn is immense. Victims often experience anxiety, depression, and a loss of trust in those around them. The shame and embarrassment can be overwhelming, leading to social withdrawal and even suicidal thoughts. It’s not just about the images themselves but the constant fear of being recognized or judged by others. This violation leaves scars that are not easily healed.

Support and Resources for Victims

Thankfully, there are resources and support systems available for those affected by deepfake revenge porn. Victims can reach out to organizations that specialize in digital privacy and seek legal advice to help navigate this challenging situation. Here are some steps victims can take:

- Contact legal professionals who specialize in cybercrimes.

- Reach out to support groups that provide emotional and psychological assistance.

- Educate oneself about privacy settings and digital footprints to prevent future incidents.

We must continue to raise awareness and push for stronger laws and protections to help those who have been affected by this devastating misuse of technology. It’s crucial that we stand together to support victims and hold those responsible accountable.

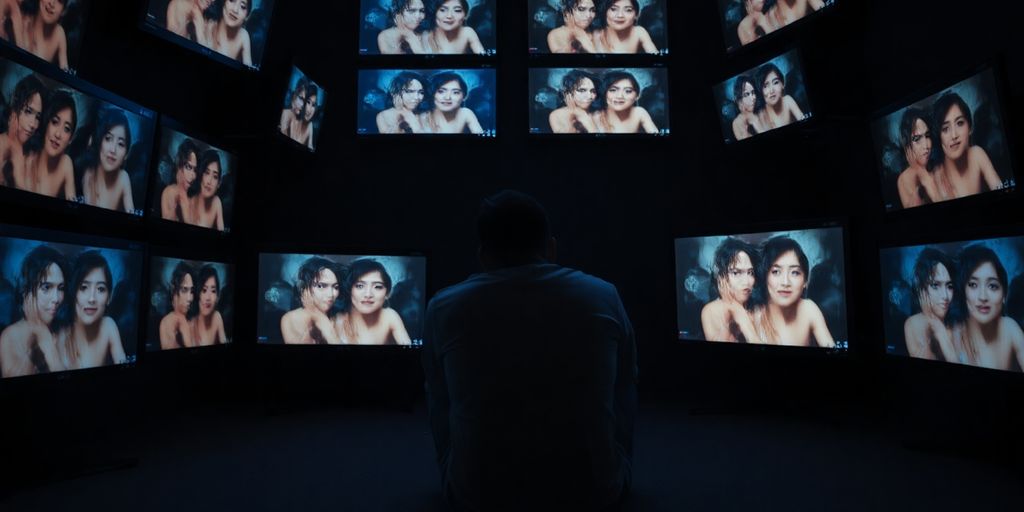

Meta’s Role in the Deepfake Controversy

Allegations Against Meta

So, here’s the deal with Meta. They’ve been slapped with some pretty serious accusations. Victims claim that Meta’s platforms have been used to spread deepfake revenge porn. The big issue? They say Meta didn’t do enough to stop it. It’s like, if you’re running a massive social media site, shouldn’t you have some serious checks in place to catch this stuff?

Meta’s Response to the Lawsuit

Now, Meta isn’t just sitting back and taking these hits. They’ve come out saying they’re committed to fighting the misuse of their platforms. They’ve got AI tools, they say, to detect and remove harmful content. But folks are questioning whether these measures are enough or just a PR move. Trust is a big word here, and people are wondering if they can trust Meta to handle this right.

Impact on Meta’s Reputation

This lawsuit is a big deal, and it’s definitely shaking things up for Meta. People are talking, and not in a good way. Investors, users, everyone is watching closely. If Meta doesn’t handle this right, it could seriously dent their image. And let’s be real, in the tech world, reputation is everything.

The challenge for Meta is not just in addressing the allegations but in rebuilding trust with its users. It’s a pivotal moment that could define the company’s future approach to privacy and security.

The $10 Billion Lawsuit Against Meta

Details of the Legal Case

Alright, so here’s the scoop. Victims of deepfake revenge porn have come together to file a massive $10 billion lawsuit against Meta. This isn’t just about money; it’s about holding a tech giant accountable for the misuse of AI-generated content on its platforms. The lawsuit alleges that Meta failed to prevent the spread of harmful deepfakes, causing significant distress to those affected.

Potential Outcomes and Implications

What could happen if the court sides with the victims? Meta could face a monumental financial hit and might be forced to implement stricter controls over content shared on its platforms. This case could set a precedent, influencing how tech companies manage AI-generated content and protect users from digital harm.

Reactions from Legal Experts

Legal experts are buzzing about this case. Some say it’s a wake-up call for tech companies, while others believe it’s a stretch to hold Meta responsible for content created by third parties. There’s a lot of debate over what this lawsuit means for the future of AI regulation and the responsibilities of tech platforms.

This lawsuit isn’t just about money; it’s a battle for accountability in an age where technology often outpaces the law.

As Georgia Harrison emphasizes, platforms need to actively combat deepfake intimate image abuse to prevent such content from being published. This case could be a pivotal moment in that fight.

The Ethics of AI and Deepfake Technology

Moral Questions Surrounding AI Use

Alright, let’s chat about the moral maze of AI. We’ve got this amazing tech that can do wonders, but it also raises some big ethical flags. Is it right to create something that can mimic human behavior so closely? That’s a question we need to ask ourselves. AI, especially deepfake tech, can be a double-edged sword. On one hand, it can entertain and educate, but on the other, it can deceive and harm. The rise of sophisticated deepfakes presents significant ethical concerns and challenges regarding legal accountability for their potential misuse.

The Responsibility of Tech Companies

Now, who should be responsible for keeping AI in check? Tech companies, of course! They create these tools, so they’ve got to ensure they’re not misused. But how do they do that? Well, it’s not as simple as flipping a switch. Companies need to set strict guidelines and maybe even some self-imposed rules. They should be transparent about how their AI works and what it’s capable of. This way, we can trust that they’re not just unleashing chaos into the world.

Public Perception and Trust

Let’s be real, folks are wary of AI. It’s new, it’s powerful, and it can be a little creepy. Building trust is crucial. How do we do that? By being open and honest. If people know what AI can and can’t do, they’re more likely to accept it. Transparency from tech companies is key here. They need to show they’re using AI responsibly and not just for profit. It’s all about making sure people feel safe and informed about the tech that’s becoming part of their daily lives.

As AI continues to evolve, we must balance innovation with ethical considerations to ensure that technology serves humanity positively.

Cyberstalking Laws and Their Effectiveness

Analysis of Current Laws

Alright, folks, let’s dive into the nitty-gritty of cyberstalking laws. So, here’s the deal: cyberstalking is a big issue, with more folks getting harassed online every day. But are the laws keeping up? Not really. Current cyberstalking laws are like a patchwork quilt, with each state having its own rules. Some states have robust laws, while others barely scratch the surface. This inconsistency makes it tough to protect victims effectively.

Case Studies of Cyberstalking

Let’s walk through some hypothetical scenarios to see how these laws might play out. Imagine someone is being stalked online, receiving threatening messages, and having their privacy invaded. In states with strong laws, the perpetrator might face serious charges, but in others, the victim might struggle to get any legal support.

- Case A: A victim in California, where laws are stronger, manages to get a restraining order and sees their stalker prosecuted.

- Case B: Meanwhile, in another state with weaker laws, a victim might find their case dismissed due to lack of evidence.

- Case C: On the federal level, only severe cases make it to court, leaving many victims without justice.

Proposed Changes to Legislation

We need to talk about what can be done to fix this mess. First off, there should be a push for more uniform laws across states. This would make it easier for victims to seek help no matter where they live. A national standard could ensure that all victims have access to the same level of protection.

- Standardization: Implementing a consistent legal framework across the country.

- Awareness Campaigns: Educating the public about their rights and how to report cyberstalking.

- Resource Allocation: Providing more resources for law enforcement to handle these cases effectively.

In a world where digital interactions are the norm, it’s crucial that our legal systems evolve to protect individuals from online threats. Cyberstalking isn’t just a digital nuisance; it’s a real-world problem that demands serious attention and action.

In conclusion, while the current laws have made some strides, there’s a long way to go to ensure everyone feels safe online. It’s time for lawmakers to step up and make the necessary changes.

The Future of AI Regulation

Predictions for AI Legislation

Alright, so let’s chat about where AI regulation is headed. Right now, it’s like the Wild West out there. Everyone’s trying to figure out how to keep things in check without stifling innovation. Federal AI legislation is expected to remain limited, which means states might step up with their own rules to fill the gap. It’s like a patchwork quilt of laws, each one a little different from the next. Some folks are predicting more comprehensive laws in the next few years, but who knows? It’s a bit of a guessing game at this point.

Balancing Innovation and Safety

Here’s the tricky part: we want AI to keep growing and doing awesome stuff, but we don’t want it to turn into a sci-fi nightmare. It’s all about finding that sweet spot between letting tech companies innovate and making sure we’re all safe. AI regulation is like walking a tightrope. Too much control, and we risk slowing down progress. Too little, and we might end up with some serious issues on our hands. It’s a balancing act that lawmakers are still trying to figure out.

Global Perspectives on AI Laws

Looking at the big picture, different countries have their own takes on AI regulation. Some are all in, crafting strict laws to keep things in check. Others are more laid-back, taking a “wait and see” approach. This global mix can make things a bit messy, especially for companies operating internationally. But it’s also a chance to learn from each other and maybe even come up with a unified approach someday. Until then, it’s a bit of a free-for-all, with each nation doing its own thing.

We’re in uncharted territory with AI regulation. It’s a balancing act between innovation and safety, with each country trying to find its own path. The future is uncertain, but one thing’s for sure: AI isn’t going anywhere, and neither is the need for smart regulation.

Public Awareness and Education on Deepfakes

The Importance of Digital Literacy

So, deepfakes are everywhere these days, right? We really need to get a grip on what they are and how they work. Digital literacy is super important because it helps us spot these fake videos and images that can mess with our heads. Imagine watching a video of a famous person saying something crazy, and it’s all fake! We need to teach everyone, especially kids, how to tell what’s real and what’s not.

Educational Initiatives and Campaigns

There’s a bunch of programs out there trying to help people understand deepfakes. Schools are starting to include lessons on digital media literacy, which is awesome. Plus, there are campaigns on social media that spread the word about the challenges deepfakes pose. These efforts aim to make sure we’re not just sitting ducks when someone tries to fool us with a fake video.

How to Identify Deepfakes

Alright, so spotting a deepfake isn’t always easy, but there are some tricks we can use:

- Check the Source: If something seems off, see where it came from. Is it a reliable source?

- Look for Weird Stuff: Sometimes deepfakes have glitches, like weird eye movements or strange lighting.

- Trust Your Gut: If it feels fake, it might be. Don’t just believe everything you see.
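If it helps to see that checklist written down, here’s a tiny Python sketch of it. To be clear, this is not a deepfake detector: every field is something a person has to judge by eye, and the names `ClipObservations` and `red_flags` are made up for this illustration.

```python
from dataclasses import dataclass


@dataclass
class ClipObservations:
    """What a viewer noticed about a clip. Every field is a manual judgment."""
    source_is_reliable: bool
    has_odd_eye_movement: bool
    has_strange_lighting: bool
    feels_fake: bool


def red_flags(obs: ClipObservations) -> list[str]:
    """Return the checklist items that this clip trips."""
    flags = []
    if not obs.source_is_reliable:
        flags.append("unreliable or unknown source")
    if obs.has_odd_eye_movement:
        flags.append("weird eye movements")
    if obs.has_strange_lighting:
        flags.append("strange lighting")
    if obs.feels_fake:
        flags.append("gut feeling that it is fake")
    return flags


# Hypothetical example: a clip from an unknown account with odd eye movement.
clip = ClipObservations(
    source_is_reliable=False,
    has_odd_eye_movement=True,
    has_strange_lighting=False,
    feels_fake=True,
)
for flag in red_flags(clip):
    print("Red flag:", flag)
```

The more flags a clip trips, the more reason to double-check before sharing it. An empty list doesn’t prove a clip is real; it only means none of these particular warning signs showed up.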

“In a world where seeing is no longer believing, understanding the tools and techniques of deception is our best defense.”

Learning about deepfakes and how to spot them is key to staying savvy in this digital age. Let’s make sure we’re all in the know and not fooled by these digital tricks.

The Intersection of Technology and Privacy Rights

Privacy Concerns in the Digital Age

In today’s tech-driven world, our personal information is more exposed than ever. From social media to online shopping, every click can leave a digital footprint. Privacy concerns have skyrocketed, especially with the rise of deepfakes and AI-generated content. These technologies can manipulate images and videos, creating false realities that can harm reputations and invade personal lives. It’s crucial that we understand how our data is being used and take steps to protect it.

Legal Protections for Individuals

To combat these privacy issues, there are laws in place, but they’re often playing catch-up with technology. Laws like GDPR in Europe and CCPA in California aim to give individuals more control over their personal data. Yet, enforcing these laws is challenging, especially when dealing with cross-border data flows and multinational companies. The legal system must adapt quickly to ensure that individuals have the necessary protections against the misuse of their data.

The Role of Tech Companies in Safeguarding Privacy

Tech companies have a huge responsibility when it comes to protecting user privacy. They collect vast amounts of data, and how they choose to handle it can have significant implications. Companies like Meta are often in the spotlight due to their data practices. It’s essential for these companies to be transparent about their data policies and to implement robust security measures to protect user information.

As technology continues to evolve, our privacy rights must be safeguarded. It’s not just about keeping our data safe, but about ensuring that technology is used ethically and responsibly. We all deserve to know how our information is being used and to have control over it.

The Psychological Impact of Deepfake Technology

Understanding the Emotional Toll

Let’s talk about what deepfakes do to people, emotionally speaking. Imagine waking up and finding your face on a video you never made. This kind of shock isn’t just a minor inconvenience; it can be downright traumatic. Victims often report feelings of anxiety, stress, and even depression. It’s like your identity is stolen, but worse, because it’s out there for everyone to see. The emotional toll is heavy, and it doesn’t just go away overnight.

Coping Mechanisms for Victims

So, what can people do when they find themselves in this nightmare? Here are a few steps:

- Seek Support: Talk to someone you trust. It could be a friend, family member, or a mental health professional.

- Legal Advice: Sometimes, taking legal action can help you regain a sense of control.

- Digital Hygiene: Be cautious about what you share online. It’s not foolproof, but it can help.

The Role of Mental Health Professionals

Mental health pros are crucial here. They provide the tools and support victims need to navigate this mess. Therapy can help process the trauma, and sometimes, just having someone to talk to makes a world of difference. These professionals can guide victims through coping strategies and help them regain their confidence.

When technology messes with our sense of self, it’s not just a digital issue—it’s a deeply personal one. We must remember that our mental health is just as important as our online presence.

Research indicates that unauthorized use of AI clones and deepfakes can result in significant psychological distress, including anxiety and stress, for individuals whose identities are misrepresented.

The Global Response to Deepfake Challenges

International Efforts to Combat Deepfakes

Alright, let’s talk about the worldwide hustle against deepfakes. Countries everywhere are stepping up their game. Some places are rolling out laws to keep this tech in check. Others are cooking up guidelines to help folks spot these fakes. It’s a mixed bag of tricks, but the goal is the same—get ahead of the deepfake game.

Collaborations Between Nations

Here’s the scoop: countries are teaming up to tackle deepfakes. It’s like a global buddy system. They’re sharing intel, tech, and strategies. Why? Because deepfakes don’t care about borders. They’re a global headache. By working together, nations hope to curb the negative impacts of this tech.

The Role of International Law

International law is trying to catch up with deepfakes. It’s a bit of a slow dance, but it’s happening. Some folks think we need new laws to deal with the unique challenges deepfakes bring. Others argue we can tweak existing laws to fit the bill. Either way, the legal world is buzzing with ideas on how to handle this digital beast.

Deepfakes are shaking things up, and the world is responding. It’s a race against time to protect privacy and truth in the digital age.

Looking Ahead: Navigating the Future of AI and Accountability

So, here we are. The lawsuit against Meta is just the beginning of a long road ahead. It’s not just about the money, though $10 billion is no small change. It’s about setting a precedent, making sure tech giants are held accountable for the tools they create. Deepfakes aren’t going away anytime soon, and neither is the debate about privacy and consent in the digital age. California’s new laws are a step in the right direction, but there’s still a lot of work to be done. As AI continues to evolve, we need to keep asking the tough questions. Who’s responsible when things go wrong? How do we protect people from being exploited by technology? It’s a conversation that needs to happen, and it needs to happen now. Let’s hope this case sparks the change we desperately need.

Frequently Asked Questions

What is a deepfake?

A deepfake is a video, image, or audio clip that has been created or altered using artificial intelligence to make it appear as if someone did or said something they never actually did or said.

How are deepfakes created?

Deepfakes are made using AI technology that swaps faces in videos or images, often using a large amount of data to train the system to mimic someone’s appearance and movements.

Why are deepfakes a problem?

Deepfakes can be used to spread false information, damage reputations, and violate privacy, making them a serious concern for individuals and society.

What laws exist to combat deepfakes?

Some places, like California, have laws allowing people to sue if deepfakes of them are used in porn without consent, and there are bans on political deepfakes during elections.

Can victims of deepfake revenge porn get help?

Yes, victims can seek support from legal experts, mental health professionals, and organizations dedicated to helping those affected by non-consensual content.

What is Meta’s involvement in the deepfake lawsuit?

Meta is being sued for $10 billion by victims who claim the company allowed AI-generated deepfake content to spread on its platforms without proper controls.

How can you spot a deepfake?

Look for unnatural movements, inconsistent lighting, and odd facial expressions. Sometimes, the audio may not sync well with the video.

What can be done to stop deepfakes?

Improving technology to detect deepfakes, creating stricter laws, and raising public awareness about the issue can help mitigate the problem.